Visual Recognition with Core ML

Classify images with Watson Visual Recognition and Core ML. The images are classified offline using a deep neural network that is trained by Visual Recognition.

Before you begin

Make sure that you have installed Xcode 9 or later and iOS 11.0 or later. These versions are required to support Core ML.

Getting the files

Use GitHub to clone the repository locally, or download the .zip file of the repository and extract the files.

Running Core ML Vision Simple

Identify common objects with a built-in Visual Recognition model. Images are classified with the Core ML framework.

- Open QuickstartWorkspace.xcworkspace in Xcode.
- Select the Core ML Vision Simple scheme.
- Run the application in the simulator or on your device.

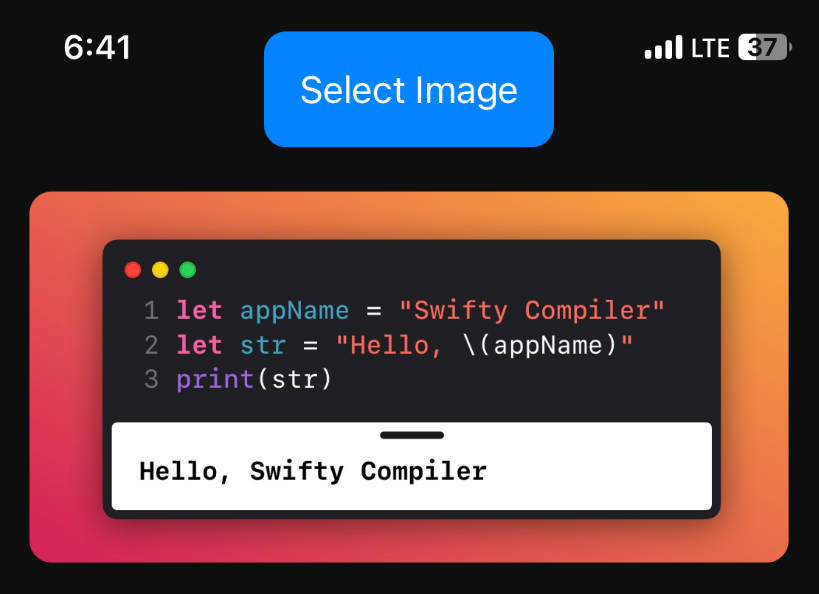

- Classify an image by clicking the camera icon and selecting a photo from your photo library. To add a custom image in the simulator, drag the image from the Finder to the simulator window.

Tip: This project also includes a Core ML model to classify trees and fungi. You can switch between the two included Core ML models by uncommenting the model you would like to use in ImageClassificationViewController.

Source code for ImageClassificationViewController.
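As a rough illustration of what classifying an image with Core ML involves, the following minimal sketch runs a classifier model through Apple's Vision framework. It is not the project's actual ImageClassificationViewController code: the `Classification` type and the `classify` and `summary` functions are hypothetical names introduced here, and the sketch assumes the model you pass in is an image classifier (for an Xcode-generated model class, that is typically its `model` property).

```swift
import CoreGraphics
import CoreML
import Vision

/// One classification result: a label and the model's confidence (0...1).
/// Hypothetical helper type, not part of the project.
struct Classification {
    let label: String
    let confidence: Float
}

/// Runs `mlModel` on `image` through Vision and returns the top results.
/// Assumes `mlModel` is an image classifier; other model types yield an empty array.
func classify(_ image: CGImage, with mlModel: MLModel, maxResults: Int = 3) throws -> [Classification] {
    // Wrap the Core ML model so that Vision can scale and crop the input image.
    let visionModel = try VNCoreMLModel(for: mlModel)
    let request = VNCoreMLRequest(model: visionModel)
    request.imageCropAndScaleOption = .centerCrop

    // perform(_:) is synchronous; results are available once it returns.
    let handler = VNImageRequestHandler(cgImage: image, options: [:])
    try handler.perform([request])

    let observations = request.results as? [VNClassificationObservation] ?? []
    return observations.prefix(maxResults).map {
        Classification(label: $0.identifier, confidence: $0.confidence)
    }
}

/// Formats results for display as "label (NN%)", separated by commas.
func summary(of results: [Classification]) -> String {
    return results
        .map { "\($0.label) (\(Int(($0.confidence * 100).rounded()))%)" }
        .joined(separator: ", ")
}
```

Switching between the two bundled models then amounts to passing a different `MLModel` to `classify`; everything runs on the device, with no network call.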

Running Core ML Vision Custom

The second part of this project builds from the first part and trains a Visual Recognition model (also called a classifier) to identify common types of cables (HDMI, USB, etc.). Use the Watson Swift SDK to download, manage, and execute the trained model. By using the Watson Swift SDK, you don't have to learn about the underlying Core ML framework.

Setting up Visual Recognition in Watson Studio

- Log in to Watson Studio. From this link you can create an IBM Cloud account, sign up for Watson Studio, or log in.

- After you sign up or log in, you'll be on the Visual Recognition instance overview page in Watson Studio.

Tip: If you lose your way in any of the following steps, click the IBM Watson logo on the top left of the page to return to the Watson Studio home page. From there you can access your Visual Recognition instance by clicking the Launch tool button next to the service under "Watson services".

Training the model

- In Watson Studio on the Visual Recognition instance overview page, click Create Model in the Custom box.

- If a project is not yet associated with the Visual Recognition instance you created, a project is created. Name your project Custom Core ML and click Create.

  Tip: If no storage is defined, click refresh.

- Upload each .zip file of sample images from the Training Images directory onto the data pane on the right side of the page. Add the hdmi_male.zip file to your model by clicking the Browse button in the data pane. Also add the usb_male.zip, thunderbolt_male.zip, and vga_male.zip files to your model.

- After the files are uploaded, select Add to model from the menu next to each file, and then click Train Model.

Copy your Model ID and API Key

- In Watson Studio on the custom model overview page, click your Visual Recognition instance name (it's next to Associated Service).

- Scroll down to find the Custom Core ML classifier you just created.

- Copy the Model ID of the classifier.

- On the Visual Recognition instance overview page in Watson Studio, click the Credentials tab, and then click View credentials. Copy the api_key of the service.

Adding the classifierId and apiKey to the project

- Open the project in Xcode.

- Copy the Model ID and paste it into the classifierID property in the ImageClassificationViewController file.

- Copy your api_key and paste it into the apiKey property in the ImageClassificationViewController file.
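To make the two values from the steps above concrete, here is a minimal sketch of how they might be held and sanity-checked before the app contacts the service. The `WatsonCredentials` type and its `isComplete` check are hypothetical and are not part of the project or the Watson Swift SDK. In the project itself the values are plain properties in ImageClassificationViewController, and the placeholder strings below are illustrative only.

```swift
import Foundation

/// Hypothetical container for the two values pasted in from Watson Studio.
struct WatsonCredentials {
    /// The api_key copied from the Credentials tab.
    let apiKey: String
    /// The Model ID copied from the classifier.
    let classifierID: String

    /// True when both values contain something other than whitespace.
    var isComplete: Bool {
        let values = [apiKey, classifierID]
        return values.allSatisfy {
            !$0.trimmingCharacters(in: .whitespacesAndNewlines).isEmpty
        }
    }
}

// Illustrative placeholders; replace them with your own values.
let credentials = WatsonCredentials(apiKey: "your-api-key", classifierID: "your-model-id")
if !credentials.isComplete {
    print("Set apiKey and classifierID before running Core ML Vision Custom.")
}
```

A check like this fails early with a readable message instead of surfacing later as an authentication error.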

Downloading the Watson Swift SDK

Use the Carthage dependency manager to download and build the Watson Swift SDK.

- Install Carthage.

- Open a terminal window and navigate to the Core ML Vision Custom directory.

- Run the following command to download and build the Watson Swift SDK:

carthage bootstrap --platform iOS

Tip: Regularly download updates of the SDK so you stay in sync with any updates to this project.

Testing the custom model

- Open QuickstartWorkspace.xcworkspace in Xcode.

- Select the Core ML Vision Custom scheme.

- Run the application in the simulator or on a device.

- Classify an image by clicking the camera icon and selecting a photo from your photo library. To add a custom image in the simulator, drag the image from the Finder to the simulator window.

- Pull new versions of the visual recognition model with the refresh button in the bottom right.

Tip: The classifier status must be Ready before you can use it. Check the classifier status in Watson Studio on the Visual Recognition instance overview page.