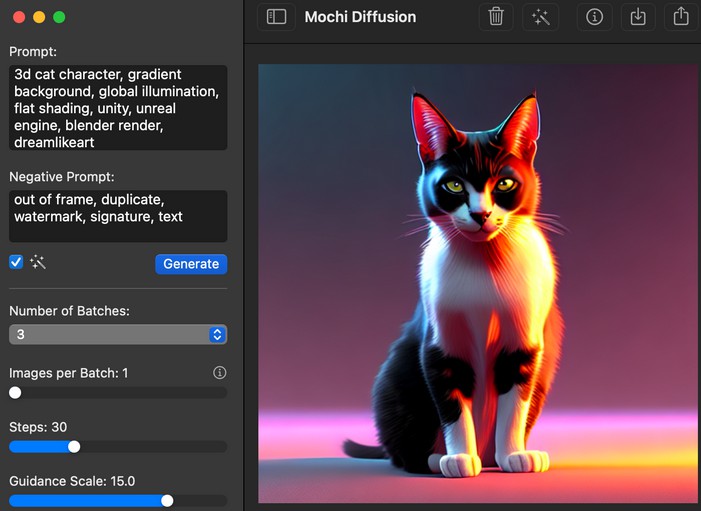

Mochi Diffusion

Run Stable Diffusion on Apple Silicon Macs natively

Description

This app uses Apple’s Core ML Stable Diffusion implementation to achieve maximum performance on Apple Silicon Macs while reducing memory requirements.

Features

- Extremely fast and memory efficient (~150 MB with Neural Engine)

- Runs well on all Apple Silicon Macs by fully utilizing Neural Engine

- Generate images locally and completely offline

- Generated images are saved with their prompt info in the EXIF metadata

- Upscale generated images to high resolution (using RealESRGAN)

- Use custom Stable Diffusion Core ML models

- No worries about pickled models (Core ML models are not Python pickle files)

- Native macOS app built with SwiftUI

Releases

Download the latest version from the releases page.

Running

When you use a model for the very first time, it may take up to 30 seconds for the Neural Engine to compile a cached version of it. Subsequent generations will be much faster.

Compute Unit

- CPU & Neural Engine: provides a good balance between speed and low memory usage

- CPU & GPU: may be faster on M1 Max, Ultra and later, but will use more memory

Depending on the option chosen, you will need to use the matching model version (see the Models section for details).
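The pairing rule between compute units and model versions amounts to a small compatibility check. The following is a minimal Swift sketch of that rule; the names `ComputeUnitOption`, `ModelVariant`, `isCompatible` and `supportedOptions` are hypothetical and are not taken from the Mochi Diffusion source.

```swift
// Hypothetical sketch: illustrative names, not Mochi Diffusion's actual types.

/// Compute unit options offered by the app.
enum ComputeUnitOption: CaseIterable {
    case cpuAndNeuralEngine
    case cpuAndGPU
}

/// Core ML model versions described in the Models section.
enum ModelVariant: CaseIterable {
    case splitEinsum  // compatible with every compute unit option
    case original     // compatible with CPU & GPU only
}

/// Returns true when the model version can run with the chosen compute unit option.
func isCompatible(_ variant: ModelVariant, with option: ComputeUnitOption) -> Bool {
    switch variant {
    case .splitEinsum:
        return true
    case .original:
        return option == .cpuAndGPU
    }
}

/// Lists every compute unit option that a model version supports.
func supportedOptions(for variant: ModelVariant) -> [ComputeUnitOption] {
    ComputeUnitOption.allCases.filter { isCompatible(variant, with: $0) }
}

// Usage: warn when an original model is paired with the Neural Engine option.
let chosen: ComputeUnitOption = .cpuAndNeuralEngine
if !isCompatible(.original, with: chosen) {
    print("The original model version requires the CPU & GPU option.")
}
```

In a real app these two options would presumably map onto Core ML's `MLComputeUnits` cases `.cpuAndNeuralEngine` and `.cpuAndGPU` (available since macOS 13); that mapping is an assumption here, not a description of how Mochi Diffusion is implemented.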

Models

You will need to convert or download Core ML models in order to use Mochi Diffusion.

A few models have been converted and uploaded here.

- Convert or download Core ML models

  - The split_einsum version is compatible with all compute unit options, including Neural Engine

  - The original version is only compatible with the CPU & GPU option

- By default, the app’s working directory (MochiDiffusion) is created in your Documents folder. You can change this location in Settings.

- Inside the working folder’s models folder, create a new folder with the name you’d like displayed in the app, then move or extract the converted model files into it.

- Your directory should look like this:

~/Documents/MochiDiffusion/models/[Model Folder Name]/[Model's Files]
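The folder layout above can be sketched in Swift with Foundation's `FileManager`. This is a minimal illustration, assuming only the path shown above; the helper names `defaultWorkingDirectory`, `modelsDirectory` and `availableModels` are hypothetical and not part of Mochi Diffusion.

```swift
import Foundation

// Hypothetical helpers (not part of Mochi Diffusion) that mirror the layout
//   <working directory>/models/<Model Folder Name>/<model files>

/// Default working directory: ~/Documents/MochiDiffusion.
/// The app lets you change this location in Settings.
func defaultWorkingDirectory(home: URL) -> URL {
    home.appendingPathComponent("Documents", isDirectory: true)
        .appendingPathComponent("MochiDiffusion", isDirectory: true)
}

/// Folder that contains one subfolder per model.
func modelsDirectory(in workingDirectory: URL) -> URL {
    workingDirectory.appendingPathComponent("models", isDirectory: true)
}

/// Sorted names of the model subfolders; each name is what the app would display.
func availableModels(in workingDirectory: URL) throws -> [String] {
    let entries = try FileManager.default.contentsOfDirectory(
        at: modelsDirectory(in: workingDirectory),
        includingPropertiesForKeys: [.isDirectoryKey],
        options: [.skipsHiddenFiles]
    )
    // Keep directories only; loose files placed directly in models/ are ignored.
    return try entries
        .filter { try $0.resourceValues(forKeys: [.isDirectoryKey]).isDirectory == true }
        .map { $0.lastPathComponent }
        .sorted()
}

// Usage: list the models under the default location, if it exists.
let workingDirectory = defaultWorkingDirectory(home: URL(fileURLWithPath: NSHomeDirectory()))
if let models = try? availableModels(in: workingDirectory) {
    print("Found models:", models)
}
```

Passing the working directory in as a parameter keeps the helpers usable when the location has been customized in Settings.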

Compatibility

- Apple Silicon (M1 and later)

- macOS Ventura 13.1 and later

- Xcode 14.2 (to build)
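The runtime requirements above can be checked with a few lines of Swift. This is a hypothetical sketch (the names `minimumMacOS`, `isAtLeast`, `isAppleSilicon` and `meetsRequirements` are illustrative); it relies on Foundation's `ProcessInfo.operatingSystemVersion` and Swift's compile-time `#if arch(arm64)` condition.

```swift
import Foundation

// Hypothetical helper, not part of Mochi Diffusion: checks the documented
// requirements (Apple Silicon, macOS Ventura 13.1 or later).

let minimumMacOS = OperatingSystemVersion(majorVersion: 13, minorVersion: 1, patchVersion: 0)

/// True when `version` is greater than or equal to `minimum`, compared component by component.
func isAtLeast(_ version: OperatingSystemVersion, _ minimum: OperatingSystemVersion) -> Bool {
    let lhs = [version.majorVersion, version.minorVersion, version.patchVersion]
    let rhs = [minimum.majorVersion, minimum.minorVersion, minimum.patchVersion]
    return !lhs.lexicographicallyPrecedes(rhs)
}

/// True when this code was compiled for arm64, the architecture of Apple Silicon Macs.
var isAppleSilicon: Bool {
    #if arch(arm64)
    return true
    #else
    return false
    #endif
}

func meetsRequirements() -> Bool {
    #if os(macOS)
    return isAppleSilicon && isAtLeast(ProcessInfo.processInfo.operatingSystemVersion, minimumMacOS)
    #else
    return false  // Mochi Diffusion is a macOS-only app
    #endif
}

print(meetsRequirements() ? "Requirements met" : "Requires an Apple Silicon Mac running macOS 13.1 or later")
```

Foundation also offers `ProcessInfo.isOperatingSystemAtLeast(_:)`, which performs the same comparison against the running system; the explicit comparison here just keeps the logic testable. Note that `#if arch(arm64)` reflects the architecture the code was compiled for, so an Intel build running under Rosetta on an Apple Silicon Mac would report false.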

Privacy

All generation happens locally and absolutely nothing is sent to the cloud.

Credits

- Apple’s Core ML Stable Diffusion implementation

- Hugging Face’s SwiftUI sample implementation

- App Icon by Zabriskije