PreciseDecimal

Precise JSON decimal parsing for Swift

Introduction

Swift has long suffered from a problem with its Decimal type: silent loss of precision.

This happens with the most common ways of initializing a Decimal, because both go through Double:

let badDecimal = Decimal(3.133) // 3.132999999999999488

let badDecimal: Decimal = 3.133 // 3.132999999999999488

But not with these:

let goodDecimal = Decimal(string: "3.133") // 3.133

let goodDecimal = Decimal(sign: .plus, exponent: -3, significand: 3133) // 3.133

This also applies to JSON decoding, since it uses NSJSONSerialization under the hood, which presumably parses decimal numbers as Double and then initializes a Decimal via the lossy Double initializer shown above. A common workaround is to transmit sensitive Decimal values as strings and parse them with the string initializer, which does preserve precision. Often, however, the format of a JSON payload is out of one's control.
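As a minimal sketch of the string workaround described above, the snippet below decodes an amount that the server sends as a JSON string and converts it with Decimal(string:). The type name StringPrice and the payload shape are hypothetical and exist only for illustration; they are not part of this library.

```swift
import Foundation

// Hypothetical payload type: the amount arrives as a JSON string, e.g. "3.133".
struct StringPrice: Decodable {
    let amount: Decimal

    private enum CodingKeys: String, CodingKey {
        case amount
    }

    init(from decoder: Decoder) throws {
        let container = try decoder.container(keyedBy: CodingKeys.self)
        // Decode the raw text first, so the value never passes through Double.
        let raw = try container.decode(String.self, forKey: .amount)
        // A fixed locale keeps "." as the decimal separator regardless of user settings.
        guard let value = Decimal(string: raw, locale: Locale(identifier: "en_US_POSIX")) else {
            throw DecodingError.dataCorruptedError(
                forKey: .amount,
                in: container,
                debugDescription: "Expected a decimal number encoded as a string"
            )
        }
        amount = value
    }
}

let stringJSON = Data(#"{ "amount": "3.133" }"#.utf8)
let stringPrice = try JSONDecoder().decode(StringPrice.self, from: stringJSON)
print(stringPrice.amount) // 3.133
```

This only helps when you control the payload format, which is exactly the limitation noted above.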

This is something that Apple will most likely fix at some point. In the meantime, PreciseDecimal has your back.

Usage

This library declares a lightweight PreciseDecimal type as a wrapper around Decimal, with precise init and Decodable implementations.

let goodDecimal = PreciseDecimal(3.133) // 3.133

let goodDecimal: PreciseDecimal = 3.133 // 3.133

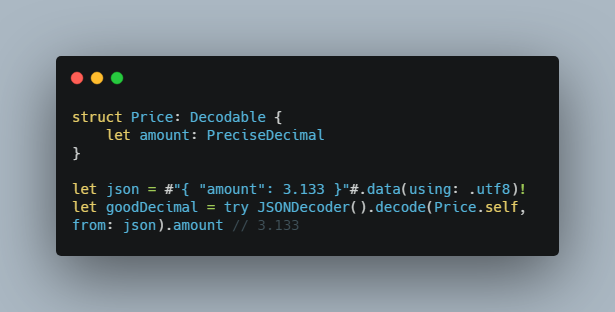

struct Price: Decodable {
    let amount: PreciseDecimal
}

let json = #"{ "amount": 3.133 }"#.data(using: .utf8)!

let goodDecimal = try JSONDecoder().decode(Price.self, from: json).amount // 3.133
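For intuition about how a wrapper of this kind could work, here is a rough sketch. It is not PreciseDecimal's actual source: the type SketchDecimal and its approach are assumptions made for illustration. The idea is to route a Double through its textual description, which Swift prints as the shortest string that round-trips to the same Double, and then hand that text to the string initializer instead of the lossy Double initializer.

```swift
import Foundation

// Hypothetical wrapper for illustration only; NOT the library's implementation.
struct SketchDecimal: Decodable, ExpressibleByFloatLiteral {
    let value: Decimal

    init(_ double: Double) {
        // Double.description yields the shortest round-tripping text ("3.133"),
        // so parsing it avoids the binary expansion 3.132999999999999488.
        // Fall back to the lossy initializer if the text cannot be parsed.
        value = Decimal(string: double.description, locale: Locale(identifier: "en_US_POSIX"))
            ?? Decimal(double)
    }

    init(floatLiteral literal: Double) {
        self.init(literal)
    }

    init(from decoder: Decoder) throws {
        // Decode as Double, then reuse the text-based conversion above.
        let container = try decoder.singleValueContainer()
        let double = try container.decode(Double.self)
        self.init(double)
    }
}

let sketch = SketchDecimal(3.133)
let sketchLiteral: SketchDecimal = 3.133
print(sketch.value) // 3.133
```

Note that this recovers the decimal the author most likely meant, not extra precision: a value with more significant digits than a Double can hold is still rounded before the conversion happens.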

Try it out!

PreciseDecimal supports Arena, so you can effortlessly take it for a spin in a playground before deciding to add it to your codebase.

Simply install Arena and run arena davdroman/PreciseDecimal --platform macos in your terminal.