Queryable

The open-source version of Queryable, an iOS app that runs the CLIP model on iOS to search the Photos album offline.

Unlike the search function in the iPhone’s default photo gallery, which relies on keywords, Queryable lets you search with natural sentences like “a dog chasing a balloon on the lawn”.

Performance

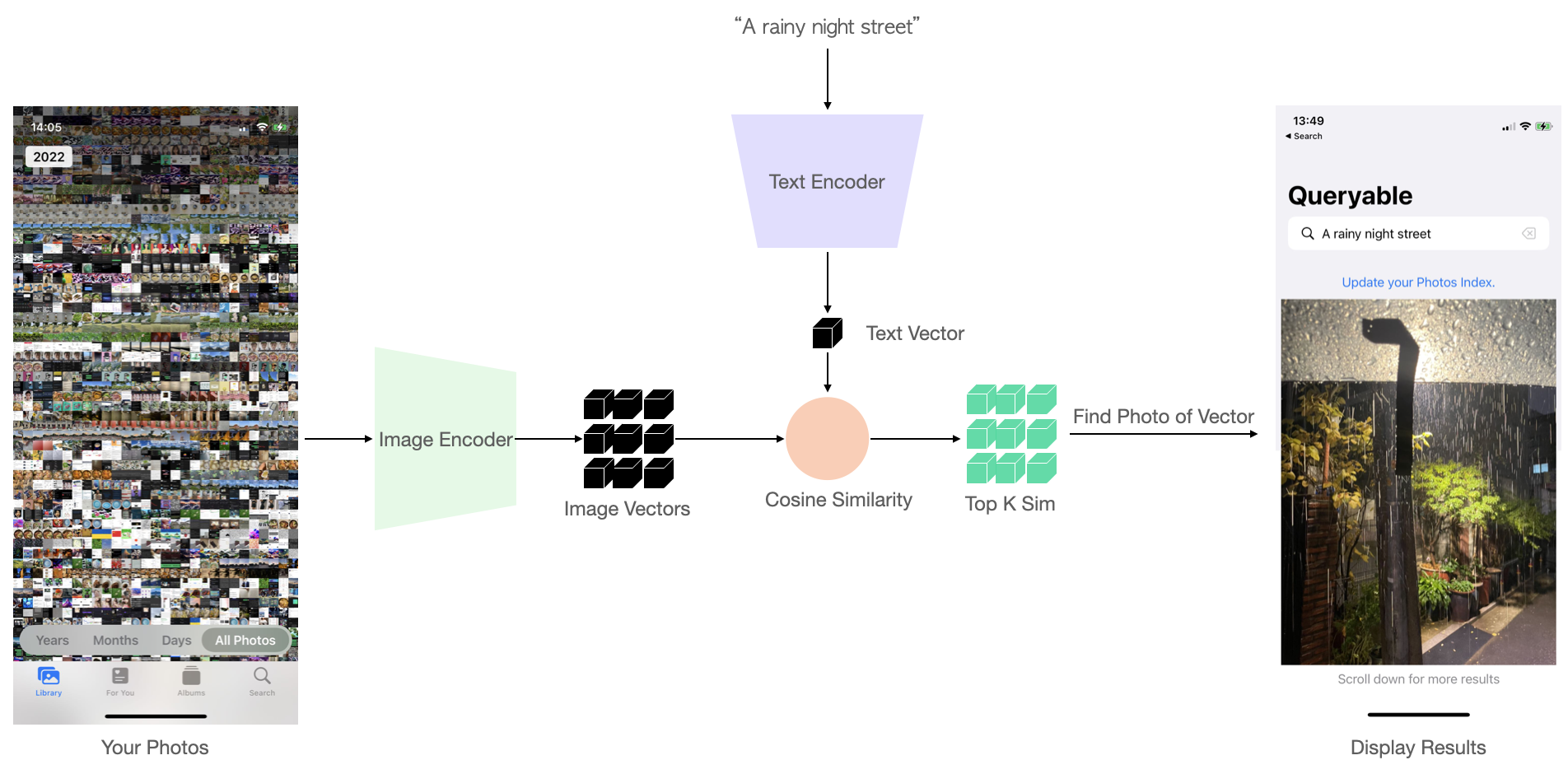

How does it work?

First, every photo in your album is processed one by one through the CLIP Image Encoder, producing a set of local image vectors. When you enter a text query, the text first passes through the Text Encoder to obtain a text vector, which is then compared for similarity against the stored image vectors, one by one. Finally, the top K most similar results are sorted and returned. The process is as follows:

For more details, please refer to my article Run CLIP on iPhone to Search Photos.
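The search step described above can be sketched in a few lines. This is only an illustration in Python, not the app's Swift/Core ML code: it assumes cosine similarity as the comparison metric, uses toy 3-dimensional vectors in place of real encoder output, and the names `normalize`, `top_k`, and the photo ids are hypothetical.

```python
import heapq
import math


def normalize(vec):
    """Scale a vector to unit length so a dot product equals cosine similarity."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec] if norm > 0 else list(vec)


def top_k(query_vec, image_vecs, k=3):
    """Return the k (photo_id, score) pairs most similar to the query, best first."""
    q = normalize(query_vec)
    # Compare the text vector against every stored image vector, one by one.
    scored = (
        (photo_id, sum(a * b for a, b in zip(q, normalize(vec))))
        for photo_id, vec in image_vecs.items()
    )
    # nlargest returns results sorted from highest to lowest score.
    return heapq.nlargest(k, scored, key=lambda pair: pair[1])


# Toy vectors standing in for Image Encoder output (assumption, not real data).
image_vecs = {
    "IMG_0001": [0.9, 0.1, 0.0],
    "IMG_0002": [0.0, 1.0, 0.0],
    "IMG_0003": [0.7, 0.7, 0.0],
}
# Toy vector standing in for Text Encoder output for some query.
query_vec = [1.0, 0.0, 0.0]

results = top_k(query_vec, image_vecs, k=2)
for photo_id, score in results:
    print(photo_id, round(score, 4))
```

Because the image vectors are computed once and stored locally, only the text query needs to be encoded at search time; the rest is a linear scan over the stored vectors.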

Run on Xcode

Download the ImageEncoder_float32.mlmodelc and TextEncoder_float32.mlmodelc from Google Drive.

Clone this repo, put the downloaded models under the CoreMLModels/ path, and run the project in Xcode; it should work.

Contributions

You can apply Queryable to your own business product, but I don’t recommend modifying the appearance directly and then listing it on the App Store. You can contribute to this product by submitting commits to this repo; PRs are welcome.

If you have any questions/suggestions, here are some contact methods: Discord | Twitter | Reddit: r/Queryable.

License

MIT License

Copyright (c) 2023 Ke Fang